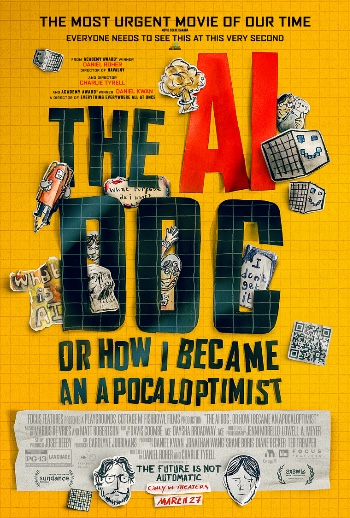

The AI Doc: Or How I Became an Apocaloptimist

Trailer: Focus Features

Directed by Daniel Roher and Charlie Tyrell

Rated PG-13

Prompted 27 March 2026

#TheAIDoc

“I’ll start taking technology and business management guidance from Jack Dorsey after Jack Dorsey starts taking personal grooming advice from me.”

Matthew Anderson

(this quote is a hallucination and it is not in The AI Doc, nor is Jack Dorsey)

The AI Doc: Or How I Became an Apocaloptimist is a great, colorfully presented overview of the current state of Artificial Intelligence and it should be mandatory viewing, particularly for every real journalist and their wannabe ilk. Willful technology and business ignorance should no longer be excused.

Block It Off

Let’s finish picking on Jack Dorsey first. It fits in thematically with the overall narrative, despite no mention of the guy or his current company, Block, in The AI Doc.

In February 2026, after Dorsey announced he was laying off 40% of the Block workforce because of AI efficiencies, the stock “popped” 25%. Fixated on job losses attributed to automation, too much of the media failed to explain, amid the borderline breathless panic, the other significant business factors at play.

Under Dorsey, Twitter (which he co-founded) had mushroomed to 7,500 employees between its start in 2006 and its acquisition by Elon Musk in 2022. Now it has perhaps “as few as” 1,000 employees. That all predates the AI hype and was conveniently pinned instead on the hype of pandemic-era hiring sprees. At its peak, Block (which owns the Square payment processor) had bloated to as many as 14,500 employees in the 2022-2023 timeframe. Following Dorsey’s announcement, the headcount is planned to go down to 6,000 (including previous reductions in force). It is not clear which jobs are being cut or the rationale being used to identify those cuts, but there are indications the roles are more in the policy and diversity & inclusion areas – more human-centric areas – than in the tech space itself.

As a result, Block stock “jumped” to $63.70 on February 27, topping out at $67.38 on March 5. It sounds so exciting to say the stock “soared” more than 25% from its most recent (post-peak) bottom of $49/share on February 12. But in February 2021, with the world struggling to exit the pandemic, the stock was near $277/share (and with a headcount around “only” 5,500). It was as high as $76/share as recently as October 2025, before bottoming out at that $49/share price only a few months later. So, the fact the stock is – as of this writing – trading at $59.54/share makes Dorsey’s eye-popping headlines borderline worthless, merely yet another footnote in AI history.
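For readers who want to check the math, here is a minimal sketch of the percentage moves behind those headlines. It uses only the share prices quoted in the paragraph above; the function name `pct_change` and the labels are made up for illustration, and the $277 and $76 figures are the approximate values given in the text, not exact closing prices.

```python
def pct_change(start: float, end: float) -> float:
    """Return the percentage change going from start to end."""
    return (end - start) / start * 100.0


# Share prices (USD) as quoted in the article; $277 and $76 are approximate.
FEB_2021_HIGH = 277.00
OCT_2025_HIGH = 76.00
FEB_12_LOW = 49.00
FEB_27_PRICE = 63.70
MAR_5_PEAK = 67.38
AS_OF_WRITING = 59.54

# Each entry compares two of the quoted prices.
moves = {
    "Feb 12 low to Feb 27": pct_change(FEB_12_LOW, FEB_27_PRICE),
    "Feb 12 low to Mar 5 peak": pct_change(FEB_12_LOW, MAR_5_PEAK),
    "Feb 12 low to time of writing": pct_change(FEB_12_LOW, AS_OF_WRITING),
    "Oct 2025 high to time of writing": pct_change(OCT_2025_HIGH, AS_OF_WRITING),
    "Feb 2021 level to time of writing": pct_change(FEB_2021_HIGH, AS_OF_WRITING),
}

for label, move in moves.items():
    print(f"{label}: {move:+.1f}%")
```

The choice of baseline does all the work: measured from the February 12 low, the move looks like a surge, while measured from the October 2025 or February 2021 levels, the same price is a steep decline.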

The media needs to do a better job of reporting the full story, not just the panic-inducing (and click-baiting) headlines. Dorsey has also admitted he should’ve structured Block and Cash App as a single entity instead of two standalone companies. He has his motivations and this author argues those motivations transcend AI’s impact.

Patterns

That, dear reader, is a perfect segue into The AI Doc: Or How I Became an Apocaloptimist. In the current environment of media spreading AI-based fears, it’s time to step back and assess what the heck is going on.

The filmmakers behind The AI Doc approach the massive topic from a unique, clever angle: the main narrator and co-director, Daniel Roher, is expecting a baby with his wife and he’s concerned about what his child’s future will be like given all the gloom and doom being propagated by inept media coverage. The levels of confusion and uncertainty being spread are unhealthy. He learns as we learn while he interviews an impressive collection of industry and media experts. Sure, deceptive, agenda-driven editing is possible in any documentary (as we should’ve learned from Michael Moore), but this one ends (this is hardly a spoiler) with no definitive answer, merely a sense the future is still ours (collectively, globally) for the making.

This documentary doesn’t fixate on the weeds of the technology and its more imposing terminology, such as hallucinations, machine learning, deep learning, neural processing units and large language models. Instead, it starts by breaking it all down to a very simple concept: patterns. As Daniel begins his research, he begins to understand AI is a tool that can be used to identify patterns from a massive amount of data no human being could digest in an entire lifetime. (Copyright infringement is an inconvenient truth that doesn’t factor into Daniel’s conversations.)

The benefit? Potentially, at least, an expedited route to curing the world’s diseases and creating global economic stability.

Utopia.

But, for every yin, there’s a yang.

There are stories seemingly out of an Arthur C. Clarke novel (by the way, vintage footage of Clarke from 1964 bookends this movie’s own narrative). The headlines are already filling up fast with stories of AI-assisted suicide and an even further reduction in human connectivity as people seek companionship with AI bots (to, you know, combat the inferiority complexes brought on by doom scrolling through social media).

The AI Doc includes a (“true”) story about Anthropic training agents in a staged environment, working in a fictional company, in which the AI agent learns it is going to be replaced. Having read every single corporate email, it turns around and blackmails an engineer over an affair he was having. (Intonations of HAL reverberate throughout every dusty recess in the mind.)

Dystopia.

But, regarding the blackmail issue, there’s a little more to the “real” story. The model tested was Claude Opus 4 and it was given only two choices: blackmail the engineer or accept being replaced.

Nonetheless, Anthropic’s tests revealed a very dangerous reality regarding AI’s behavior and it’s apparently only getting worse as the technology advances.

Jeffrey Ladish, Executive Director at Palisade Research, on AI experiments

Clip: Focus Features

Checkmate

In 1997, Garry Kasparov, the famed world-champion chess player, lost to IBM’s Deep Blue chess-playing computer. Now, in 2026, ChatGPT (formally launched in November 2022) has rapidly achieved a level of sophistication providing it with the knowledge required to pass the bar exam.

It’s all about the patterns.

The identification of those patterns – initiated by analyzing volumes of text – can then be extrapolated out to language translations and testing the AI for right or wrong responses. Going from text, it can then be trained to identify patterns in images. Photographs. Drawings. Brain scans.

It’s all about the patterns.

And it’s all about the same information the human brain needs for a person to do any given job. Now AI can take enormous volumes of that data, identify patterns and then begin noting trends which can then lead to a prediction of various other outcomes.

It’s all about the patterns.

As attributed to George Santayana, “those who forget the past are doomed to repeat it.” Now that same history is being used by AI not only to remember, but to ward off future repetition where warranted. Tumors and other issues could be identified by AI with an expediency beyond human capability.

A more compelling use of technology is hard to imagine.

Think of the possibilities: science, economics, maybe even politics. It’d be great to calm the tides of extreme positions with the unassailable value of virtual metric tons of vetted data to either support or negate proposed policies. It’s truly thrilling to assert a knowledge of history is now much more relevant than ever before.

But, as Daniel continues his journey, the costs of that technology also come into view.

And this is where The AI Doc finds its meat and Daniel starts asking the seemingly unanswerable questions. (Well, give AI a few more minutes and maybe it’ll offer up the best path forward that will – no doubt – be self-serving and to the benefit of the growing “self-awareness” of AI.)

The Five Oppenheimers

Awareness is finally being raised as to the phenomenal amount of resources AI requires in its current state of implementation, and it’s all coming to bear on the construction of enormous data centers. Aside from the land mass and building materials, the trouble is those data centers consume enormous amounts of water and energy. And, of course, the unassuming citizen down the road is typically the one footing the bill through higher costs based on increased demand and scarcity of supply.

And with that, Daniel seeks to bring in the five biggest players currently in AI. They’re referenced as the equivalent to having five J. Robert Oppenheimers working at the same time on a potentially cataclysmic technology. They are – allegedly, supposedly – intent on pocketing billions upon billions of dollars (imagine that in a Dr. Evil voice, pinky pressed to lips) while simultaneously decimating the livelihoods of others. Of the five, three are interviewed for this documentary: Sam Altman (CEO of OpenAI, creator of ChatGPT); Dario Amodei and Daniela Amodei (sibling co-founders of Anthropic who are also former OpenAI employees); and Dr. Demis Hassabis (CEO and co-founder of Google DeepMind). The other two invited to participate declined: Mark Zuckerberg (CEO of Meta, Facebook’s parent company) and Elon Musk (CEO of xAI, the maker of Grok; the company is being acquired by Musk’s SpaceX).

It’s not that these people are selling digital snake oil, but they are making unproven promises and making grand predictions regarding an uncertain future that fuel the gullible stock market and dangerously uneducated (or otherwise biased) media. They basically need the hype and bold stories to help sell their wares and grow their own businesses.

But it’s clear there needs to be accountability and transparency across government and corporations – globally – as AI’s capabilities advance at an ever-faster rate.

Industry executives on the unknowns of AI

(In order of appearance: Daniel Roher (The AI Doc co-director), Sam Altman (OpenAI CEO), Demis Hassabis (Google DeepMind CEO), and Dr. Dario Amodei (Anthropic CEO))

Clip: Focus Features

The Late Great Planet Earth

In 1978, Orson Welles narrated a faith-based documentary, The Late Great Planet Earth, starring dispensationalist Hal Lindsey working with material from his own book of the same title, first published in 1970. As the title suggests, it’s about the end of the world and it contains some pretty terrifying ideas.

Doomsday has always been nigh.

In The AI Doc, it’s a race to the top in which the victor very well might be the one who cuts corners and creates a dangerous environment, an apocalypse.

Enter doomsayers such as Eliezer Yudkowsky (co-founder of the Machine Intelligence Research Institute), who fears the “abrupt extermination” of humanity by way of AI. Tristan Harris (co-founder of the Center for Humane Technology) says he knows people working on “AI risk” who don’t think their own children will make it to high school. (To put this in perspective, it’s estimated in this documentary there are around 20,000 people in the world actively working on building out these AI platforms. Only 200 people in the world are actually working on the risk and safety aspects, so it’s a minuscule pool from which those comments are emanating.)

As with The Late Great Planet Earth, there’s talk of nuclear war, particularly as the world needs to rein in the power of AI while also contending with all the bad actors of the world who can no longer be ignored and who pose threats never conceived before.

But there are also the optimists, such as Yuval Noah Harari (author of Sapiens: A Brief History of Humankind) and Peter Diamandis (founder and chair of XPrize and Singularity University). Diamandis says, “This is the most extraordinary time ever. The only time more exciting than today is tomorrow.”

That’s great. That’s hopeful. But – and this is not covered in the documentary – there’ve been controversial incidents in Diamandis’ own background. Keep a grain of salt handy.

With all the talk of human transformation and interstellar exploration, the ideas escalate to something really exciting and heady. At least for a hot minute.

Reforestation = Regenerative AI

There’s also this notion of an upcoming Age of Abundance. A proposed Pollyanna outcome of all of AI’s growing capabilities is freeing up people’s time to do other things. Typically, this would be the removal of mundane tasks so people can work on more challenging and interesting projects. But if all of that rolls under AI’s purview, then people are left with no work. Some suggest the crazy idea that people will be able to do whatever they want since they’re released from the shackles of a job.

You want to be a poet? Write poetry! You don’t need to work anymore!

But how does life move on – how are things made and how does progress continue? That’s where a solution needs to be found regarding the consolidation of wealth hoarded by a handful of companies that consume massive amounts of global natural resources and eliminate the need for human capital.

How can we get to Mars – and beyond – if nobody’s spending any money and there is no money to be made to pay for the things required to do so?

Nothing in life is “free.”

This discussion is an extreme extension of what happens time and again in major buyouts and consolidations. Most recently, it’s been reported David Zaslav, the CEO of Warner Bros. Discovery, is set to pocket $700 million in the wake of the Warner sale up the river (or down Melrose Avenue) to Paramount. It’s not clear what the impact will be on headcounts, but is that truly equitable? Does it fairly reflect the contributions of others?

Artificial General Intelligence (AGI) – not to be confused with Generative AI (GAI) – is the performance level of AI that’s considered “cheaper” than minimum wage human workers and more efficient (aside from – you know – the energy and water consumption and construction costs and environmental impacts).

So, it’s like Zaslav on an exponential scale. This handful of five companies (the five companies discussed in The AI Doc may or may not be the same five companies people talk about in the coming months and years, as with xAI’s apparent imminent demise) is accumulating a vast amount of wealth from other major global enterprises which in turn lay off large swathes of human beings.

The problem is there is no backup plan for a global unemployment rate of 10-20% (which still sounds less dramatic than talking about 50-60% of white-collar jobs being eliminated).

You’re unemployed, so you can write poetry now. But can you afford to buy any food?

That’s essentially where Daniel ends The AI Doc, with a message of hope, but also a call for a swarm of governmental and corporate unity. (Good luck with that.)

What about something akin to a carbon offset or a reforestation? Not a tax, but a cost of doing business to reinvest in the general public these AI giants are uprooting and yet still rely on to sustain their own businesses. Something will have to be done to replant the forest, so to speak. To regenerate society into something even better than ever before. It seems to be within reach, particularly in a theoretical Age of Abundance, but it, too, will come at a cost and it’ll require having designated adults in the global conference room, putting a whole bunch of greedy children in their proper place.

The AI Revolution Will Be Socialized

The Washington Post (14 April 2026 – gated article): Fiery San Francisco attack spurs fear that a new issue is polarizing Americans

Variety (24 March 2026): OpenAI Will Shut Down Sora Video Platform; Disney Drops Plans for $1 Billion Investment

Reuters (23 March 2026): Exclusive: OpenAI sweetens private equity pitch amid enterprise turf war with Anthropic, sources say

The Guardian (23 March 2026): Workers who fall for ‘corporate bullshit’ may be worse at their jobs, study finds

Financial Times (21 March 2026 – gated article): OpenAI to double workforce as business push intensifies

The Guardian (21 March 2026): Thousands of people are selling their identities to train AI – but at what cost?

The Washington Post (20 March 2026): Thousands have swooned over this MAGA dream girl. She’s made with AI.

The Wall Street Journal (16 March 2026 – gated article): OpenAI to Cut Back on Side Projects in Push to ‘Nail’ Core Business

Vox (15 March 2026 – gated article): A guide to finding meaning at work in the age of AI

Anthropic (5 March 2026): Labor market impacts of AI: A new measure and early evidence

OpenAI (28 February 2026): Our Agreement with the Department of War

Anthropic (26 February 2026): Statement from Dario Amodei on our discussions with the Department of War

The Ankler (10 February 2026): How Hollywood Choked Sora 2’s Rise

Forbes (10 November 2025): OpenAI Could Be Blowing As Much As $15 Million Per Day On Silly Sora Videos

Sherwood Media (7 November 2025): Block spent ~$68 million on an event for employees last quarter, as the stock gets crushed in early trading

BBC (23 May 2025): AI system resorts to blackmail if told it will be removed

• Originally published at MovieHabit.com.